High-Bandwidth Connectivity Using 12X InfiniBand DDR Cables

12X InfiniBand DDR cables are high-bandwidth copper interconnects used in high-performance computing (HPC) clusters and enterprise data center fabrics. These assemblies connect switches, servers, and storage systems through a wide InfiniBand port that aggregates twelve lanes into a single connector. Designed for short-reach links, they provide low latency and stable signaling in dense environments where predictable node-to-node performance is required.

12X InfiniBand Cable Architecture

The 12X InfiniBand interface combines twelve lanes in a single connector to deliver high aggregate bandwidth between devices. Each lane is a full-duplex link made of one transmit and one receive differential pair, so a 12X cable carries 24 differential pairs. The cable routes these pairs through controlled-impedance copper conductors to maintain consistent electrical performance across the link.

This architecture enables efficient point-to-point connectivity between switches and compute nodes. Consolidating many lanes into one connector reduces the number of ports and cables while preserving high-throughput communication.
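The consolidation can be made concrete by counting lanes, differential pairs, and cables. The sketch below is illustrative only. `Port` and `cables_needed` are hypothetical names. It also treats three 4X links as equivalent to one 12X link purely by lane count, ignoring that the fabric would see them as three separate ports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Port:
    """Hypothetical model of an InfiniBand port described by its lane count."""
    width: int  # number of lanes: 1, 4, or 12

    @property
    def differential_pairs(self) -> int:
        # Each lane is full duplex: one transmit pair plus one receive pair.
        return 2 * self.width


def cables_needed(total_lanes: int, port_width: int) -> int:
    """Cables required to carry total_lanes using ports of port_width lanes."""
    return -(-total_lanes // port_width)  # ceiling division


if __name__ == "__main__":
    print(Port(12).differential_pairs)  # 24
    print(cables_needed(12, 4), cables_needed(12, 12))  # 3 1
```

Twelve lanes need three cables at 4X but only one at 12X. That difference in cable count is the simplification the wide port provides.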

DDR Signaling and Data Throughput

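In InfiniBand, Double Data Rate (DDR) refers to a per-lane signaling rate of 5 Gbit/s, twice the 2.5 Gbit/s Single Data Rate (SDR) of the original specification. The name describes the doubled lane rate. It does not mean data transfers on both edges of a separate clock, because InfiniBand lanes are serial links with the clock embedded in the data stream. DDR uses 8b/10b encoding, so each lane carries 4 Gbit/s of data, and a 12X DDR link provides 60 Gbit/s of signaling and 48 Gbit/s of data in each direction. Because the lanes run faster than at SDR, the cable's signal integrity margins are tighter.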
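Link rates follow from multiplying the per-lane rate by the link width and the encoding efficiency. This sketch covers only the 8b/10b-encoded generations: SDR at 2.5 Gbit/s, DDR at 5 Gbit/s, and QDR at 10 Gbit/s per lane. `link_rates` is a hypothetical helper. Its results are nominal physical-layer rates and ignore protocol overhead at higher layers.

```python
# Per-lane signaling rates in Gbit/s for InfiniBand generations
# that use 8b/10b line encoding.
LANE_SIGNAL_GBPS = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0}

# 8b/10b encoding carries 8 data bits in every 10 transmitted bits.
ENCODING_EFFICIENCY = 8 / 10


def link_rates(width: int, generation: str) -> tuple[float, float]:
    """Return (signaling rate, data rate) in Gbit/s for one direction."""
    signal = width * LANE_SIGNAL_GBPS[generation]
    return signal, signal * ENCODING_EFFICIENCY


if __name__ == "__main__":
    for width in (1, 4, 12):
        for gen in ("SDR", "DDR", "QDR"):
            signal, data = link_rates(width, gen)
            print(f"{width:>2}X {gen}: {signal:5.1f} Gbit/s signaling, "
                  f"{data:5.1f} Gbit/s data per direction")
```

For a 12X DDR link the function returns 60 Gbit/s of signaling and 48 Gbit/s of data per direction. Each lane is full duplex, so the same rates apply in the opposite direction at the same time.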

To support DDR signaling, 12X cable assemblies use precise conductor geometry, shielding, and grounding structures. These design elements help maintain impedance stability and reduce crosstalk between adjacent differential pairs.

Signal Integrity and Electrical Performance

Reliable InfiniBand communication depends on maintaining signal quality across all lanes. At DDR speeds, even minor variations in impedance or shielding can affect link stability.

Key considerations include:

- Controlled impedance across all differential pairs

- Minimal crosstalk between adjacent lanes

- Effective shielding against external interference

- Stable conductor geometry to reduce signal distortion

Maintaining these parameters helps every lane complete link training at full width and speed and keeps error rates low during sustained operation.
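Impedance acceptance can be expressed as a tolerance check on each differential pair. This is a minimal sketch under stated assumptions. The 100 Ω nominal differential impedance and the ±10 Ω window are placeholders, so substitute the values from the cable's datasheet. The pair names are made up, and the sample readings are illustrative, not measurements.

```python
# Placeholder acceptance window; replace with the cable datasheet values.
NOMINAL_OHMS = 100.0
TOLERANCE_OHMS = 10.0


def out_of_tolerance(measurements: dict[str, float],
                     nominal: float = NOMINAL_OHMS,
                     tolerance: float = TOLERANCE_OHMS) -> list[str]:
    """Return names of pairs whose impedance lies outside nominal +/- tolerance."""
    return sorted(name for name, ohms in measurements.items()
                  if abs(ohms - nominal) > tolerance)


if __name__ == "__main__":
    # Illustrative readings only, not data from a real cable.
    readings = {"lane0_tx": 98.5, "lane0_rx": 101.2, "lane1_tx": 112.4}
    print(out_of_tolerance(readings))  # ['lane1_tx']
```

A per-pair check like this flags a single degraded pair. Averaging across all 24 pairs would hide that pair even though it alone could keep its lane from training.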

Role in HPC and Data Center Fabrics

In high-performance computing environments, InfiniBand fabrics interconnect large numbers of compute nodes. These systems rely on low-latency communication for workloads such as simulations, analytics, and machine learning.

12X InfiniBand DDR cables provide the short-reach connections that link switches to servers and storage systems within a rack or between adjacent racks. Their wide-port design simplifies topology by delivering a high-bandwidth link over one cable instead of several narrower ones.

Mechanical Design and Serviceability

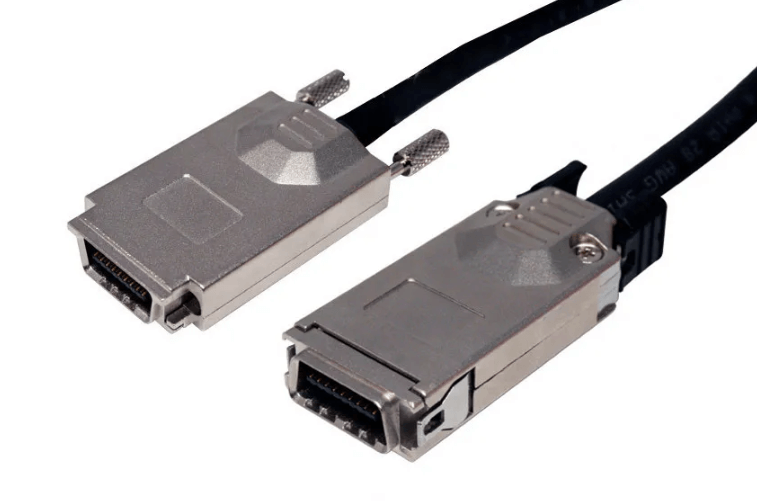

Many 12X InfiniBand cables use ejector-style connector latches that allow quick insertion and removal in densely populated switches. The latch holds the connector securely in the port while still allowing fast maintenance and hardware replacement.

The connector is designed to withstand repeated mating cycles, which suits environments where components are frequently upgraded or reconfigured.

Typical Deployment Environments

12X InfiniBand DDR cables are commonly used in:

- High-performance computing clusters

- Large-scale parallel processing systems

- Data center InfiniBand switching fabrics

- GPU compute clusters and AI training environments

- Research and scientific computing facilities

These environments require high bandwidth and consistent latency across interconnected nodes.

Installation and Routing Considerations

Observing the cable's minimum bend radius and avoiding strain on the connectors are essential for long-term reliability. Cable routing should preserve airflow and leave the connector release mechanisms accessible.
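A planned route can be checked against the minimum bend radius before cables are dressed. This is a hypothetical sketch. It assumes the limit is stated as a multiple of the cable's outer diameter, and the 10x multiplier and 9 mm diameter are placeholder values. Use the figures from the vendor's datasheet instead.

```python
# Placeholder multiplier; use the minimum bend radius from the vendor datasheet.
DEFAULT_MULTIPLIER = 10.0


def min_bend_radius_mm(outer_diameter_mm: float,
                       multiplier: float = DEFAULT_MULTIPLIER) -> float:
    """Minimum bend radius expressed as a multiple of the cable diameter."""
    return outer_diameter_mm * multiplier


def tight_bends(planned_radii_mm: dict[str, float], outer_diameter_mm: float,
                multiplier: float = DEFAULT_MULTIPLIER) -> list[str]:
    """Return the route segments whose planned radius is below the minimum."""
    limit = min_bend_radius_mm(outer_diameter_mm, multiplier)
    return [name for name, radius in planned_radii_mm.items() if radius < limit]


if __name__ == "__main__":
    # Illustrative plan for a cable with an assumed 9 mm outer diameter.
    plan = {"switch exit": 120.0, "tray drop": 80.0, "server entry": 95.0}
    print(tight_bends(plan, outer_diameter_mm=9.0))  # ['tray drop']
```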

Organized cable management and clear labeling simplify troubleshooting and reduce the risk of accidental disconnection during maintenance operations.
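During troubleshooting, it is useful to check whether a port trained at its full 12X width and DDR speed. An InfiniBand link can come up at a narrower width when some lanes fail to train. This sketch assumes the Linux RDMA stack exposes the negotiated rate in `/sys/class/infiniband/<device>/ports/<port>/rate` as a string such as `60 Gb/sec (12X DDR)`, and that SDR links may omit the speed name. Confirm the exact format on your own hosts. `parse_rate`, `check_port`, and `read_port_rate` are hypothetical helpers.

```python
import re
from pathlib import Path

# Assumed format of the sysfs "rate" attribute, e.g. "60 Gb/sec (12X DDR)".
# SDR links may omit the speed name, e.g. "30 Gb/sec (12X)".
RATE_RE = re.compile(r"^\s*([\d.]+) Gb/sec \((\d+)X(?: (\w+))?\)\s*$")


def parse_rate(text: str) -> tuple[float, int, str]:
    """Return (signaling Gbit/s, lane width, speed name) from a rate string."""
    match = RATE_RE.match(text)
    if match is None:
        raise ValueError(f"unrecognized rate string: {text!r}")
    gbps, width, speed = match.groups()
    return float(gbps), int(width), speed or "SDR"


def check_port(rate_text: str, expected_width: int = 12,
               expected_speed: str = "DDR") -> list[str]:
    """List mismatches between the trained link and the expected width/speed."""
    _, width, speed = parse_rate(rate_text)
    problems = []
    if width != expected_width:
        problems.append(f"link trained at {width}X, expected {expected_width}X")
    if speed != expected_speed:
        problems.append(f"link trained at {speed}, expected {expected_speed}")
    return problems


def read_port_rate(device: str, port: int = 1) -> str:
    """Hypothetical helper: read the rate attribute on a Linux host."""
    return Path(f"/sys/class/infiniband/{device}/ports/{port}/rate").read_text()
```

On a host, `check_port(read_port_rate(device))` returns an empty list for a healthy 12X DDR port and one message per mismatch otherwise. Here `device` is whichever adapter name appears under `/sys/class/infiniband/`.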

FAQ (Frequently Asked Questions)

What does 12X mean in InfiniBand cabling?

It means the port is twelve lanes wide. Each lane has its own transmit and receive differential pairs, and all twelve lanes share one connector, giving three times the bandwidth of a 4X link of the same generation.

What is DDR InfiniBand signaling?

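In InfiniBand, DDR denotes a per-lane signaling rate of 5 Gbit/s, double the original 2.5 Gbit/s SDR rate. With 8b/10b encoding, a 12X DDR link carries 48 Gbit/s of data in each direction.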

Where are 12X InfiniBand cables typically used?

They are used in high-performance computing clusters and data center fabrics.

Are 12X InfiniBand cables used for long-distance connections?

No. Copper 12X cables are intended for short-reach links within a rack or between nearby equipment.